The relationship between causal and moral judgments

Image: Simon Stephan

Causal responsibility is widely regarded as a necessary precondition for moral and legal responsibility. In lawsuits, for example, a fundamental principle is that a defendant can only be held accountable for a negative outcome if her causal contribution can be established “beyond substantial doubt”. But recent studies suggest that the relationship between causal and moral reasoning is not unidirectional. In some situations, causal judgments seem to depend on prevailing normative principles (i.e., what is generally considered morally right or wrong, or at least what counts as an established prescriptive norm in a given context).

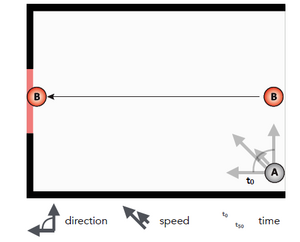

People seem to regard omissions as causal only when the context involves normative principles that call for particular actions. Imagine that you see someone drowning in a lake but decide not to help, or that your neighbor asks you to water her flowers while she is on vacation, but you forget to do so and her flowers wither. Why do omissions seem to be causal in these cases but not in others? To answer this question, we must rely on counterfactual dependence theories, which merely require that the effect counterfactually depend on the cause. But in the case of omissions, this leads to two distinct problems: an “underspecification problem” and a “causal selection problem”. As for the underspecification problem, to identify omissions that are causal, we need to counterfactually replace them with an action that would have made a difference to the occurrence of the effect.

In a first set of studies (see publication in right column), we addressed how people solve the underspecification problem. Together with Tobias Gerstenberg (MIT), we developed a “counterfactual simulation model of omissive causation”, which posits that people solve the underspecification problem by running counterfactual simulations that are constrained by the physical properties of the target environment and by the assumed physical “abilities” of the agents involved. Experiments that tested the model in physically rather simple environments indicated a close fit between model predictions and subjects’ causal judgments.

Participants watched short animations in which two players played a game of marbles. One player (player B in the Figure) had the task of flipping her marble through a gate (in some conditions, the task was to make marble B miss the gate), while the other player’s task was to prevent player B’s marble from going through the gate (in some conditions, player A was supposed to make marble B go through). In the target situation, participants observed that player A violated the rules of the game by refraining from flipping her marble. The crucial test question asked participants how strongly they agreed that “Marble B missed [went through; depending on condition] the gate because player A did not flip her marble”.

Our model predicted participants’ ratings by counterfactually simulating many flips of marble A and computing the probability that these flips would have resulted in a different outcome (marble B missing the gate or going through it). We tested different scenarios in which this counterfactual probability varied with features of the environment and with the assumed capabilities of the player who failed to act. Participants’ causal judgments varied accordingly, and we were able to rule out several alternative explanations.
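
The core idea of estimating a counterfactual probability from many simulated flips can be illustrated with a toy Monte Carlo sketch. This is not the model or the physics simulation used in the studies; it is a deliberately simplified illustration under stated assumptions. The function name `counterfactual_probability`, its parameters, and the geometric rule (a flip counts as a hit when its angular error is below a crude tolerance, and any hit is assumed to change the outcome for marble B) are all hypothetical.

```python
import math
import random


def counterfactual_probability(distance, marble_radius=1.0,
                               aim_noise_sd=0.1, n_samples=10_000, seed=0):
    """Estimate how likely a flip by player A would have changed the outcome.

    Toy assumptions (not taken from the original studies):
    - Player A aims straight at marble B, with Gaussian angular noise whose
      standard deviation (aim_noise_sd, in radians) stands in for A's "ability".
    - A flip hits marble B if its angular error is below a crude tolerance
      that shrinks as the distance between the marbles grows.
    - Any hit is assumed to change what happens to marble B.
    """
    rng = random.Random(seed)
    # Crude angular tolerance based on the combined radius of the two marbles.
    hit_tolerance = math.atan2(2 * marble_radius, distance)

    hits = 0
    for _ in range(n_samples):
        angle_error = rng.gauss(0.0, aim_noise_sd)
        if abs(angle_error) < hit_tolerance:
            hits += 1
    # Fraction of simulated flips in which the outcome would have differed.
    return hits / n_samples


if __name__ == "__main__":
    for sd in (0.05, 0.2, 0.5):
        p = counterfactual_probability(distance=20.0, aim_noise_sd=sd)
        print(f"aim noise {sd:.2f} rad -> counterfactual probability {p:.2f}")
```

In this sketch, the estimated probability is higher when marble A is closer to marble B and when player A is assumed to aim more precisely, which mirrors the qualitative claim that the counterfactual probability depends on features of the environment and on the assumed capabilities of the agent.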